Light regulation is now active

Apologies for the long absence of information regarding my Plant watering project. Today I hung up a lamp above my plant in order to provide it with enough light as …

My shared experiences and advice in software

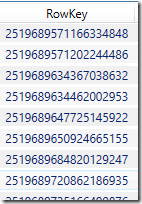

Sometimes, all you want is to be able to quickly get to the last value of a sensor, or the freshest product in your Azure Table without having to do …

(Planning the project) Hi all, and sorry for the slow updates. My new job has had me so busy that I have not found any time at all to work …

Growing concerns about the direction of XAML-based applications. Microsoft, what the hell do you think you are doing by diverging WPF, Silverlight and Silverlight for WP7? None of those 3 …

A quick introduction to how you can get up and running with Microsoft Azure. It is a hands-on guide to creating a Silverlight application that uses a REST API …

In this post, I explain how you can unit-test your Silverlight and Windows Phone 7 class libraries using MSTest and the built-in test system of VS2010, enabling you to right-click …

I’m an avid blog reader/commenter and have seen the rise of a wave of rants about Microsoft’s LightSwitch and Microsoft WebMatrix. These are products designed to make writing Windows applications …

I am an avid adopter of the Model-View-ViewModel pattern for designing applications. It is a sleek, very testable way to write software, but it has one major …

Figured I need to share with you how I normally go about designing UI, and how I make that design as testable as possible. To make things easy, I will …

This post is written for .NET, using C#, but the methods in use should be fairly easy to implement in most other computer languages. Background: I’ve often looked for …
