How I do error handling in my REST APIs

As I make more and more REST APIs, I find the need to bubble up an object that will translate to an HTTP 200 OK result or an RFC 7807 …

My shared experiences and advice in software


This is a reminder to myself more than anything else. It is not often that I get to greenfield a new project. Most of the time, the solution already exists, …


This is another blog post in the series of “Things that gave me plenty of grief, but eventually got solved”. I was working on a component that utilizes the Azure …

In this post, I explain how you can unit-test your Silverlight and Windows Phone 7 class libraries using MSTest and the built-in test system of VS2010, enabling you to right-click …

I’m an avid blog reader/commenter and have seen the rise of a wave of rants about Microsoft’s LightSwitch and Microsoft WebMatrix. These are products designed to make writing Windows applications …

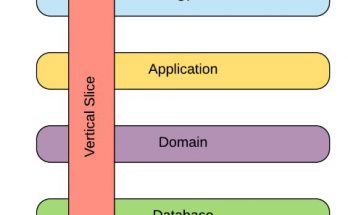

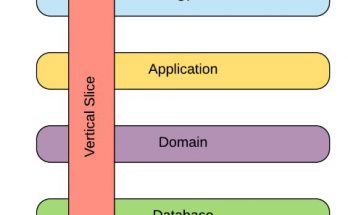

I figured I should share with you how I normally go about designing UI, and how I make that design as testable as possible. To make things easy, I will …

This post is written for .NET, using C#, but the methods in use should be fairly easy to implement in most other programming languages. Background: I’ve often looked for …
